Framelink MCP for Figma

Enables AI coding agents to access Figma design data by fetching and simplifying layout and styling metadata. This allows developers to accurately implement UI designs in code directly from Figma file links.


<a href="https://www.framelink.ai/?utm_source=github&utm_medium=referral&utm_campaign=readme" target="_blank" rel="noopener"> <picture> <source media="(prefers-color-scheme: dark)" srcset="https://www.framelink.ai/github/HeaderDark.png" /> <img alt="Framelink" src="https://www.framelink.ai/github/HeaderLight.png" /> </picture> </a>

<div align="center"> <h1>Framelink MCP for Figma</h1> <h3>Give your coding agent access to your Figma data.<br/>Implement designs in any framework in one-shot.</h3> <a href="https://npmcharts.com/compare/figma-developer-mcp?interval=30"> <img alt="weekly downloads" src="https://img.shields.io/npm/dm/figma-developer-mcp.svg"> </a> <a href="https://github.com/GLips/Figma-Context-MCP/blob/main/LICENSE"> <img alt="MIT License" src="https://img.shields.io/github/license/GLips/Figma-Context-MCP" /> </a> <a href="https://framelink.ai/discord"> <img alt="Discord" src="https://img.shields.io/discord/1352337336913887343?color=7389D8&label&logo=discord&logoColor=ffffff" /> </a> <br /> <a href="https://twitter.com/glipsman"> <img alt="Twitter" src="https://img.shields.io/twitter/url?url=https%3A%2F%2Fx.com%2Fglipsman&label=%40glipsman" /> </a> </div>

<br/>

Give Cursor and other AI-powered coding tools access to your Figma files with this Model Context Protocol server.

When Cursor has access to Figma design data, it is far better at implementing designs accurately in one shot than with alternative approaches such as pasting screenshots.

<h3><a href="https://www.framelink.ai/docs/quickstart?utm_source=github&utm_medium=referral&utm_campaign=readme">See quickstart instructions →</a></h3>

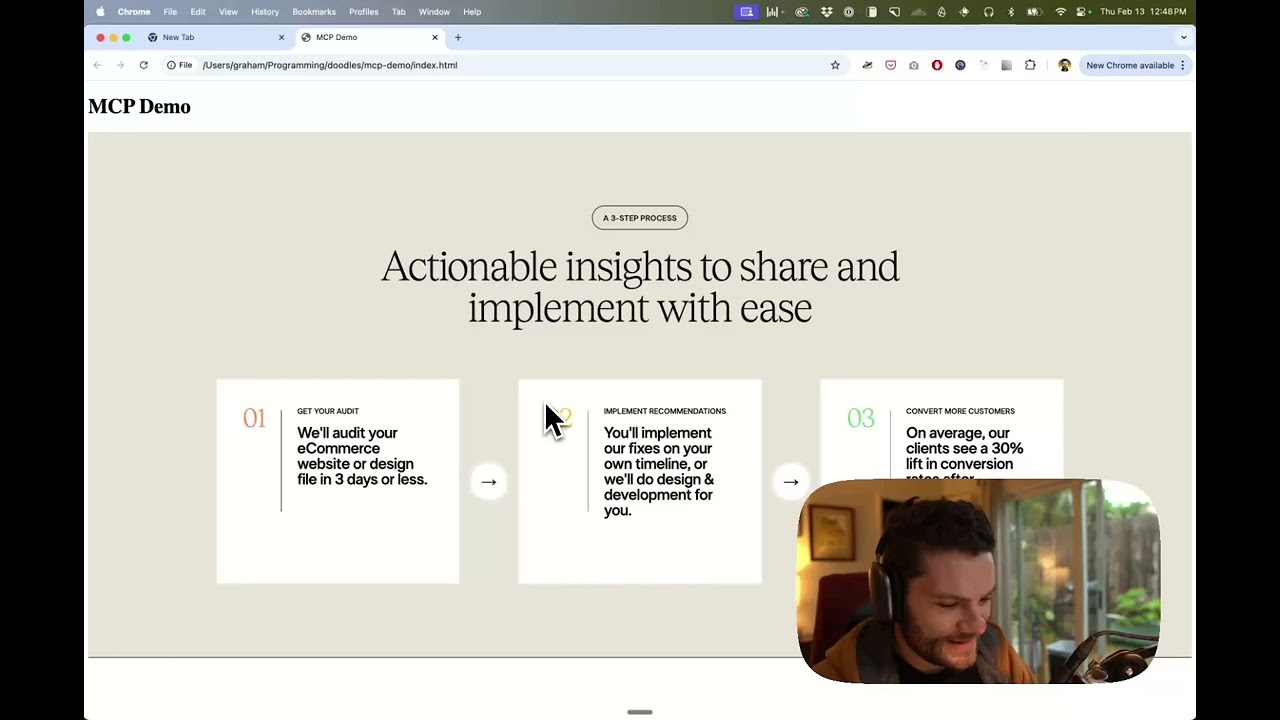

Demo

Watch a demo of building a UI in Cursor with Figma design data

How it works

- Open your IDE's chat (e.g. agent mode in Cursor).

- Paste a link to a Figma file, frame, or group.

- Ask Cursor to do something with the Figma file, e.g. implement the design.

- Cursor will fetch the relevant metadata from Figma and use it to write your code.
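To illustrate why pasting a link is enough in the steps above: a Figma link generally carries a file key in its path and, for a specific frame or group, a `node-id` query parameter. The sketch below shows one way such a link could be split into those two parts. It is only an illustration, not the server's actual code; the function name `parse_figma_link` and the sample URL are hypothetical, and it assumes links of the form `https://www.figma.com/design/<fileKey>/...` or `https://www.figma.com/file/<fileKey>/...`.

```python
from typing import Optional, Tuple
from urllib.parse import parse_qs, urlparse


def parse_figma_link(url: str) -> Tuple[str, Optional[str]]:
    """Split a Figma link into (file_key, node_id).

    Hypothetical helper for illustration only. Assumes the path looks like
    /design/<fileKey>/<name> or /file/<fileKey>/<name>.
    """
    parsed = urlparse(url)
    parts = [p for p in parsed.path.split("/") if p]
    if len(parts) < 2 or parts[0] not in ("file", "design"):
        raise ValueError(f"Not a recognised Figma file link: {url}")
    file_key = parts[1]

    # Links write node ids with a hyphen (12-34); the REST API uses a colon (12:34).
    node_ids = parse_qs(parsed.query).get("node-id")
    node_id = node_ids[0].replace("-", ":") if node_ids else None
    return file_key, node_id


# Made-up link, used only to show the shape of the result.
file_key, node_id = parse_figma_link(
    "https://www.figma.com/design/AbC123xyz/Landing-Page?node-id=12-34&t=demo"
)
print(file_key, node_id)
```

In practice you do not need to do this yourself; your IDE's agent and the MCP server handle the link for you.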

This MCP server is specifically designed for use with Cursor. Before responding with context from the Figma API, it simplifies and translates the response so only the most relevant layout and styling information is provided to the model.

Reducing the amount of context provided to the model helps make the AI more accurate and the responses more relevant.
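The simplification idea described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the server's actual implementation: the function names, the choice of which fields to keep, and the sample node are all hypothetical. The field names themselves (`absoluteBoundingBox`, `fills`, `layoutMode`, `itemSpacing`, `characters`) follow the Figma REST API's node format.

```python
# Hypothetical sketch of trimming a Figma API node down to layout and styling basics.
# The set of fields kept here is an assumption made for illustration.
KEEP_KEYS = ("id", "name", "type", "layoutMode", "itemSpacing", "characters")


def simplify_node(node: dict) -> dict:
    """Keep a small set of layout/styling fields and recurse into children."""
    slim = {key: node[key] for key in KEEP_KEYS if key in node}

    # Reduce the bounding box to just width and height.
    box = node.get("absoluteBoundingBox")
    if box:
        slim["size"] = {"width": box["width"], "height": box["height"]}

    # Keep only solid fills, converted to hex colour strings.
    fills = [
        _to_hex(fill["color"])
        for fill in node.get("fills", [])
        if fill.get("type") == "SOLID" and "color" in fill
    ]
    if fills:
        slim["fills"] = fills

    children = [simplify_node(child) for child in node.get("children", [])]
    if children:
        slim["children"] = children
    return slim


def _to_hex(color: dict) -> str:
    # Figma reports colour channels as floats in the range 0..1.
    return "#{:02x}{:02x}{:02x}".format(
        *(round(color[ch] * 255) for ch in ("r", "g", "b"))
    )


# Made-up node, shaped like a (heavily shortened) Figma API response.
raw_node = {
    "id": "1:2", "name": "Card", "type": "FRAME",
    "blendMode": "PASS_THROUGH",
    "constraints": {"vertical": "TOP", "horizontal": "LEFT"},
    "layoutMode": "VERTICAL", "itemSpacing": 8,
    "absoluteBoundingBox": {"x": 0, "y": 0, "width": 320, "height": 200},
    "fills": [{"type": "SOLID", "blendMode": "NORMAL",
               "color": {"r": 1.0, "g": 1.0, "b": 1.0, "a": 1.0}}],
    "children": [{"id": "1:3", "name": "Title", "type": "TEXT",
                  "characters": "Hello", "scrollBehavior": "SCROLLS"}],
}
slim_node = simplify_node(raw_node)
print(slim_node)
```

The point of the sketch is the shape of the transformation: fields the model is unlikely to need are dropped, and the remaining ones are expressed compactly so that less context is spent per node.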

Getting Started

Many code editors and other AI clients use a configuration file to manage MCP servers.

The figma-developer-mcp server can be configured by adding the following to your configuration file.

NOTE: You will need to create a Figma access token to use this server. Instructions for creating one are in Figma's help documentation on personal access tokens.

macOS / Linux

    {
      "mcpServers": {
        "Framelink MCP for Figma": {
          "command": "npx",
          "args": ["-y", "figma-developer-mcp", "--figma-api-key=YOUR-KEY", "--stdio"]
        }
      }
    }

Windows

    {
      "mcpServers": {
        "Framelink MCP for Figma": {
          "command": "cmd",
          "args": ["/c", "npx", "-y", "figma-developer-mcp", "--figma-api-key=YOUR-KEY", "--stdio"]
        }
      }
    }

Alternatively, you can set FIGMA_API_KEY and PORT in the env field instead of passing them as command-line arguments.
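As a rough sketch of the `env` approach, the macOS / Linux configuration might look like the following. It assumes your client supports an `env` object on each server entry, as many MCP clients do; `YOUR-KEY` is a placeholder and the `PORT` value shown is only an example.

```json
{
  "mcpServers": {
    "Framelink MCP for Figma": {
      "command": "npx",
      "args": ["-y", "figma-developer-mcp", "--stdio"],
      "env": {
        "FIGMA_API_KEY": "YOUR-KEY",
        "PORT": "3333"
      }
    }
  }
}
```

Keeping the key in `env` rather than in `args` keeps it out of the command line itself. On Windows, the same `env` object can be added to the `cmd`-based entry.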

If you need more information on how to configure the Framelink MCP for Figma, see the Framelink docs.

Star History

<a href="https://star-history.com/#GLips/Figma-Context-MCP"><img src="https://api.star-history.com/svg?repos=GLips/Figma-Context-MCP&type=Date" alt="Star History Chart" width="600" /></a>

Learn More

The Framelink MCP for Figma is simple but powerful. Get the most out of it by learning more at the Framelink site.
